
Product Design / AI / Machine Learning

Aurora Copilot

Embedding AI into a complex multi-stakeholder platform

Overview

The copilot is part of the AI layer embedded across Aurora's platform. It lives inside the workflow of senior underwriters and surfaces intelligence where decisions happen. We deliberately chose "copilot" over "autopilot": the AI does not make decisions; it prepares the underwriter to make better ones.

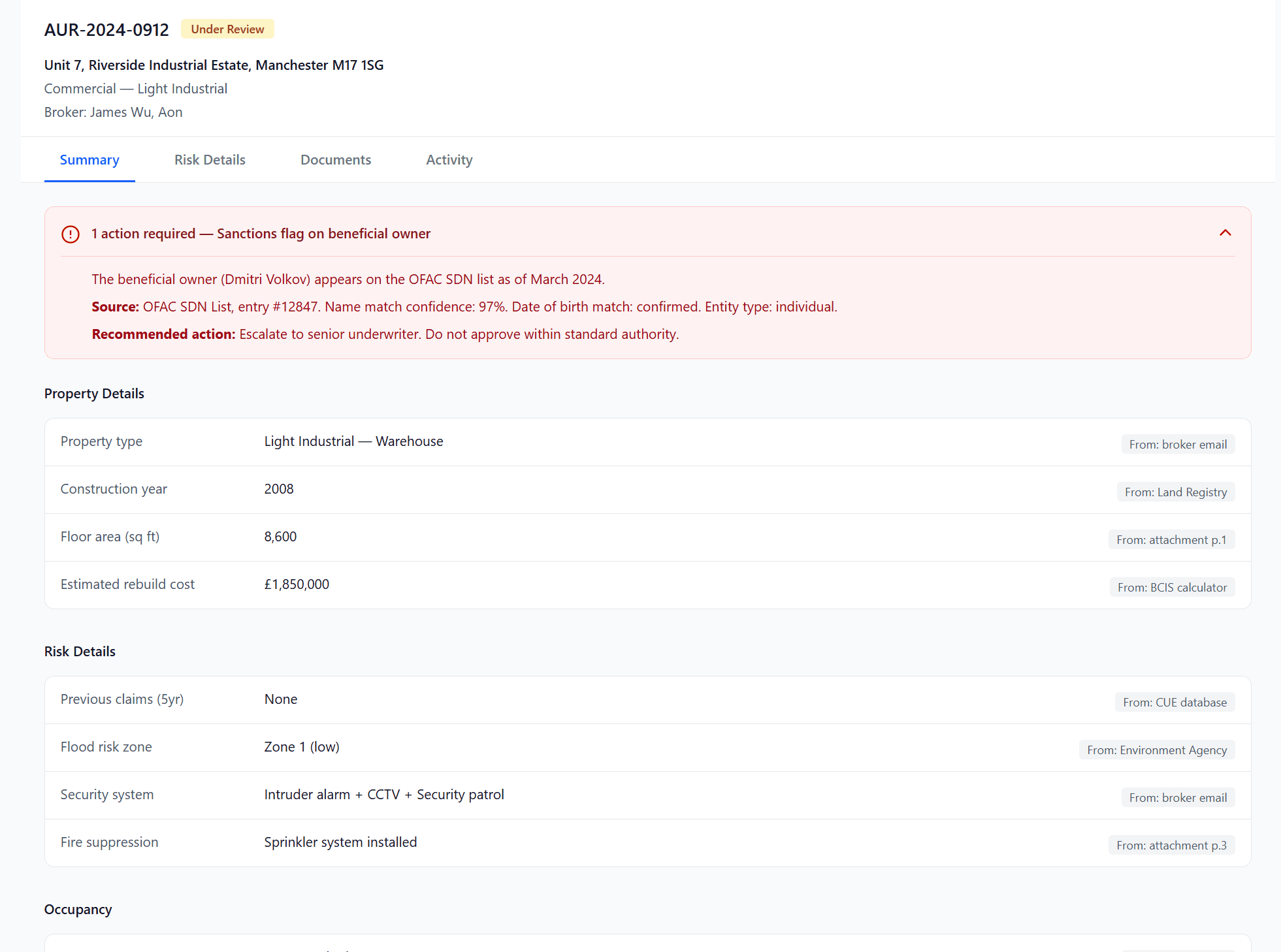

The constraint from day one: under FCA regulation, the AI can surface information but cannot give advice. It can tell an underwriter that a rebuild cost is 40% below comparable properties. It cannot tell them to increase it. Every piece of AI-generated copy in the platform had to respect that boundary.

Year: 2024 – present

Company: Aurora

Role: Lead Product Designer

Focus: Product Design, UX Strategy, Rapid prototyping, User testing

What has the AI's impact been so far?

Field correction rate: < 5% (for one product)

STP (straight-through processing) rate: 52% → 70% (as of Feb 2026)

UW throughput: 480 quotes/month across 4 risk underwriters (UWs)

the first business problem

Cases stall on missing or unverifiable data.

From discovery, we knew: if underwriters can see what the AI extracted but can't verify where it came from, they'll either send it back to the broker or check manually. Both burn time.

With the industry expecting a 24–48 hour turnaround per case, that lost time compounds fast.

The issue wouldn't be accuracy. It would be transparency. Even when the AI was right, underwriters wouldn't act on a value without understanding how the AI reached it and which source it came from.

So how might we close information gaps fast enough that cases keep moving, and make AI outputs transparent enough that underwriters progress instead of second-guessing?

trust calibration for underwriters

As an underwriter, I want to know whether the AI found a value or inferred one, because those two states require completely different actions from me.

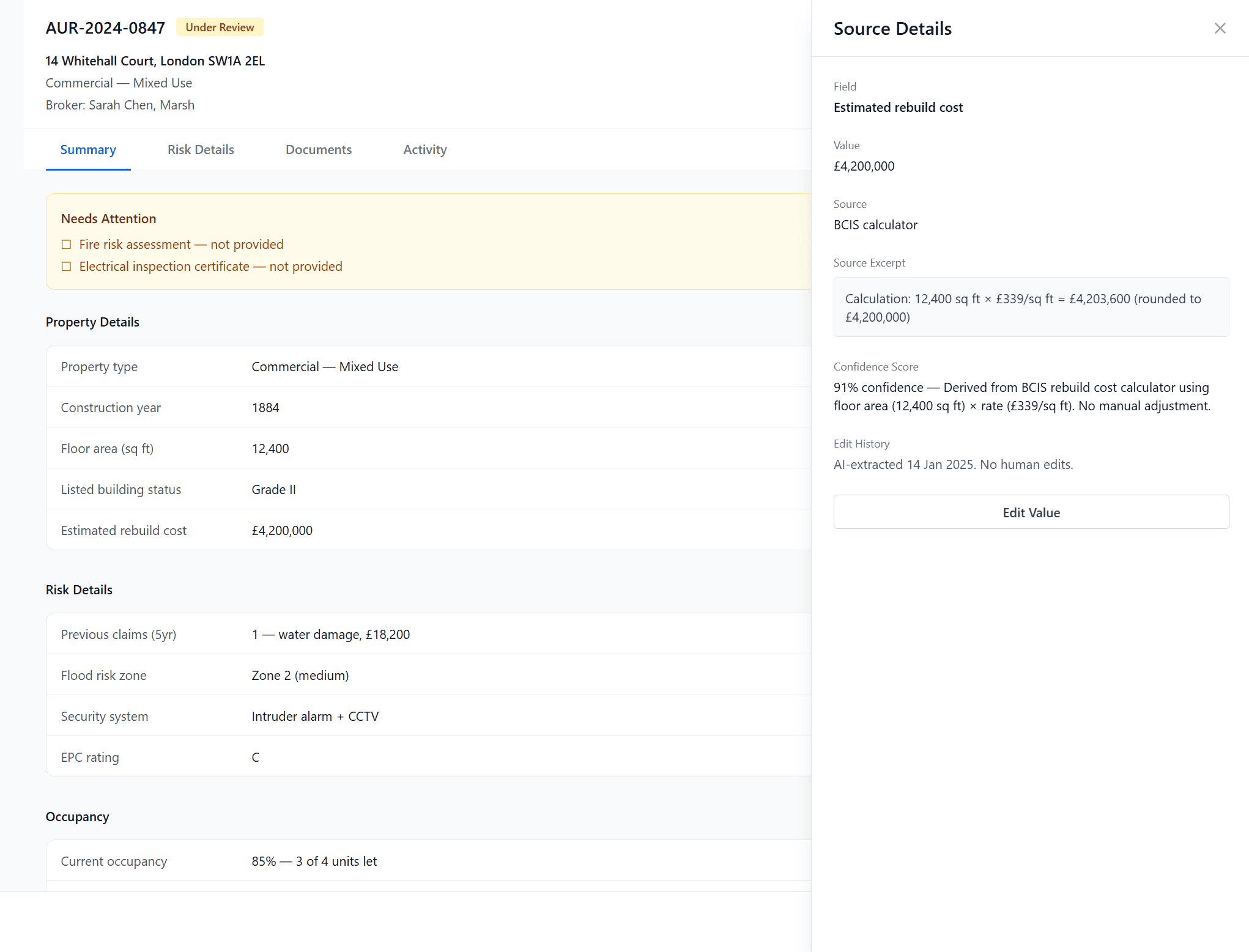

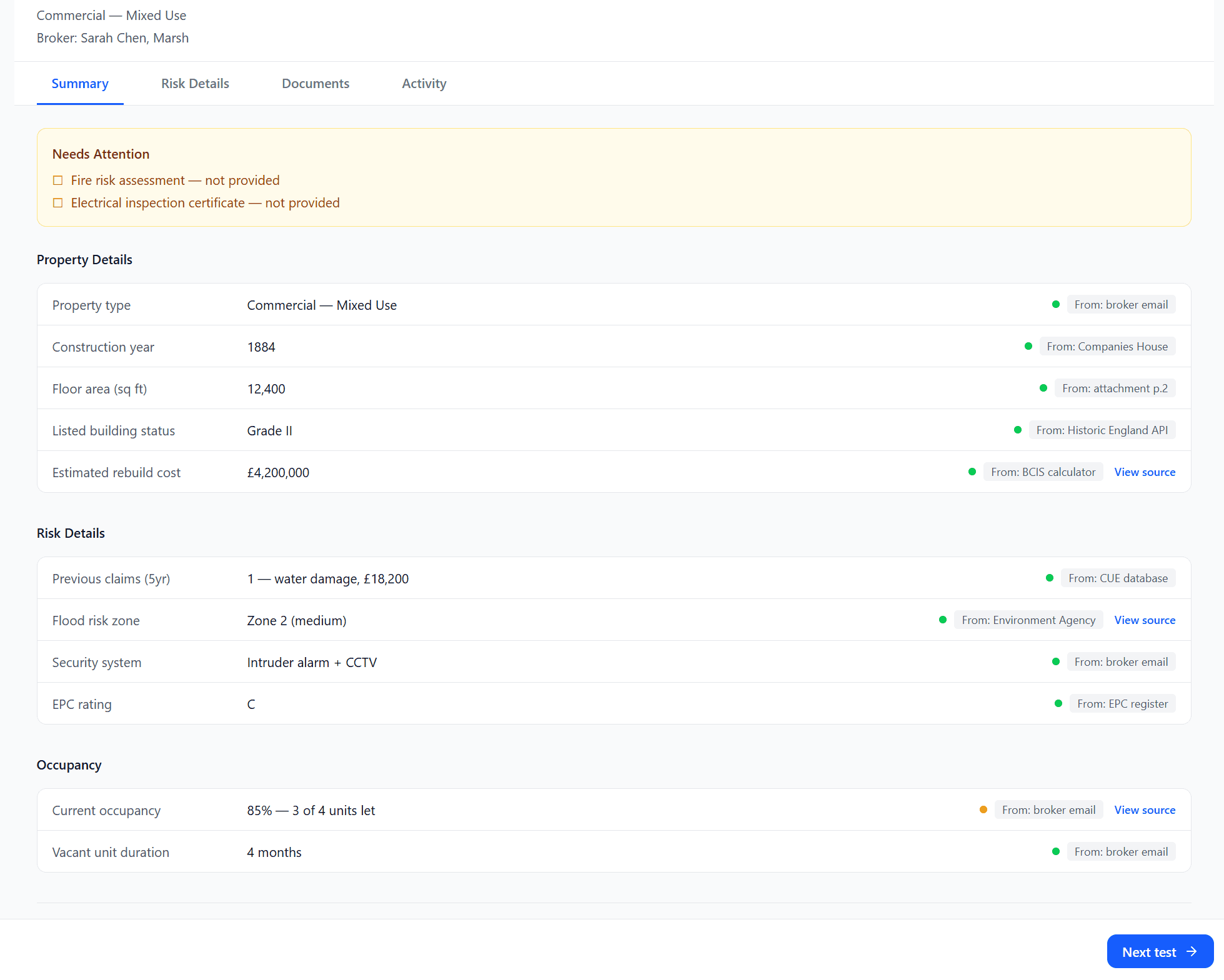

In data review, each field in the case view carries one of three tags. Each tag tells the UW something different and asks for a different action.

Extracted: AI found the value in the document. Source tag links to exact page and paragraph.

Check source: AI inferred the value. It wasn't stated explicitly, so the AI mapped it from context.

Risk flag: AI is flagging a concern about the value. The value may be correct, but something needs attention.
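One way to see why three tags beat a single confidence score is to model them as a discriminated union: each tag carries only the evidence that fits it (a source location, an inference context, or a concern) and maps to a distinct next step for the underwriter. This is a minimal TypeScript sketch under stated assumptions; the type names, fields and action wording are hypothetical and not Aurora's implementation.

```typescript
// Hypothetical model of the three data-review tags. Names are illustrative only.

// Location of an extracted value in the source document (assumed shape).
interface SourceRef {
  documentId: string;
  page: number;
  paragraph: number;
}

// Each tag carries different evidence, so the UI can only show what is true of it.
type FieldTag =
  | { kind: "extracted"; source: SourceRef }  // value stated in the document
  | { kind: "checkSource"; context: string }  // value inferred from context
  | { kind: "riskFlag"; concern: string };    // value may be right, but needs attention

interface ReviewedField {
  name: string;
  value: string;
  tag: FieldTag;
}

// Map each tag to the different action it asks of the underwriter.
function nextAction(field: ReviewedField): string {
  const tag = field.tag;
  switch (tag.kind) {
    case "extracted":
      return `Spot-check source: ${tag.source.documentId} p.${tag.source.page}, para ${tag.source.paragraph}`;
    case "checkSource":
      return `Verify inferred value (${tag.context})`;
    case "riskFlag":
      return `Review concern: ${tag.concern}`;
    default: {
      // Compile-time exhaustiveness check: adding a fourth tag breaks the build here.
      const unreachable: never = tag;
      return unreachable;
    }
  }
}
```

Because the source link only exists on the "extracted" variant, an inferred value cannot accidentally be rendered with a citation it doesn't have.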

the second challenge

Underwriters work across multiple surfaces to make a single risk decision.

Dashboard to triage. Case detail to investigate. Documents to verify. Each surface serves a different part of the decision, but if AI intelligence appeared in only one place and the underwriter wasn't on that surface at that moment, they would miss it.

The copilot's job: meet the underwriter where they already are with the right intelligence, at the right depth, for what they're doing right now.

The opportunity: How do we match AI intelligence to the underwriter's current mode so it helps, not hinders?

scanning mode

Ambient AI cards

As an underwriter, I want the AI to have already identified what needs my attention so that I'm making decisions, not doing triage again.

The dashboard tells the UW where to go: 4 need review, 2 in referral, 3 awaiting broker. Market intelligence appears with a cited source and a link to the full analysis. There are no inputs: the UW scans, prioritises and clicks through.

We originally shipped a chat input on these cards. Three contextual interviews revealed that nobody used it. UWs are triaging at this stage, not investigating.

investigating mode

Contextual intelligence

As an underwriter, I want risk signals surfaced in context, and not buried in a separate page I have to navigate to.

The AI sidebar appears across all surfaces, but its content changes based on where the UW is and what they're looking at. It arrives pre-populated, never as a blank canvas.
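The mode matching described in the scanning and investigating sections can be sketched as a function from the underwriter's current surface to what the copilot renders. This is a minimal TypeScript sketch under stated assumptions: the surface names, case statuses and briefing contents are hypothetical. The only rules taken from this case study are that scanning gets counts and links with no input, and investigating opens pre-populated for the case in view.

```typescript
// Hypothetical sketch: pick the copilot's content from the UW's current surface.
type Surface = "dashboard" | "caseDetail";
type CaseStatus = "needsReview" | "referral" | "awaitingBroker";

interface Case {
  id: string;
  status: CaseStatus;
  riskSignals: string[]; // assumed: signals already computed for this case
}

// Scanning view has no input field at all; investigating view is a ready-made briefing.
type CopilotView =
  | { mode: "scanning"; counts: Record<CaseStatus, number> }
  | { mode: "investigating"; caseId: string; briefing: string[] };

function copilotViewFor(surface: Surface, cases: Case[], activeCaseId?: string): CopilotView {
  if (surface === "dashboard") {
    // Triage: tell the UW where to go, e.g. "4 need review, 2 in referral".
    const counts: Record<CaseStatus, number> = { needsReview: 0, referral: 0, awaitingBroker: 0 };
    for (const c of cases) counts[c.status] += 1;
    return { mode: "scanning", counts };
  }
  // Investigation: pre-populate with the signals for the case being viewed.
  const active = cases.find((c) => c.id === activeCaseId);
  if (!active) throw new Error(`No case with id ${activeCaseId}`);
  return { mode: "investigating", caseId: active.id, briefing: [...active.riskSignals] };
}
```

Keeping the input out of the scanning variant's type mirrors the interview finding: there is nothing to render a chat box from on the dashboard.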

the invisible artefacts

Underpinning all of this intelligence are FCA regulations, design constraints and guidelines for AI.

FCA regulation: the AI can surface information but cannot give advice.

Outcome

STP: 52% → 70%. The consolidated follow-up and pre-populated referral resolution reduced the number of cases stalling on missing data.

4 risk UWs handle 480 quotes/month. Throughput held as volume scaled.

Quote rate: 75% → 81%. More cases reach quote-ready because broker-side completeness visibility reduced round-trip chasing.

The underwriter copilot (ambient and chat) is currently in a pilot phase: it is being tested across the Aurora platform and used by 4 underwriters in their daily workflow.

The field correction rate currently tests at under 5% (for one product).

What I learnt

AI confidence is not the same as user confidence. Source provenance builds trust faster than any model accuracy claim.

The interface patterns for showing "why" (progressive disclosure, source links, confidence indicators) were as important as the model's accuracy itself.

Match AI to cognitive mode. Scanning needs ambient information and links, not inputs. Case investigation needs a pre-populated briefing.